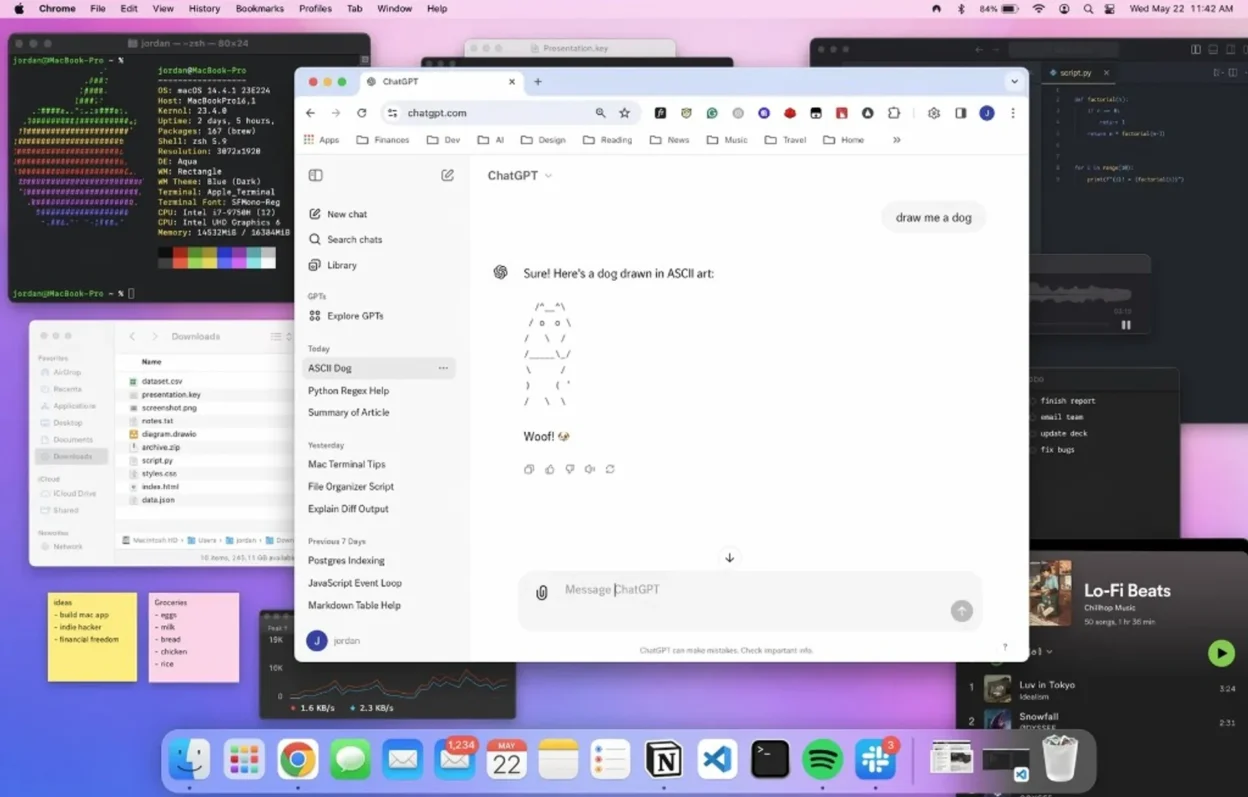

On 21 April 2026, OpenAI announced ChatGPT Images 2.0, a consumer-facing image stack aimed at menus, posters, UI mockups, and other work where legible type and dense layout used to fail first. The same launch wave includes a developer path built around the gpt-image-2 line, with cost tied to quality and resolution on the API side.

What OpenAI says shipped

Take names, positioning, and capability bullets from OpenAI’s own April 2026 announcement of ChatGPT Images 2.0 before you trust social screenshots.

OpenAI frames the upgrade around layouts that used to break generators: tiny copy, icons, busy compositions, and marketing-style deliverables (including multi-panel comics and resized panels) where older consumer models looked clever at a glance but failed on close reading.

For category background, the on-site primers on what generative AI is and what OpenAI is remain the starting points.

Why readable type in images matters

Most teams do not need “art” from an image model — they need labels: packaging, slides, fake UI for critique, event posters. When letters turn to mush, the tool fails in the same place every time, no matter how pretty the lighting.

Images 2.0 is positioned as closing that gap enough that downstream editors spend less time re-keying nonsense strings. That is the economic story underneath the launch.

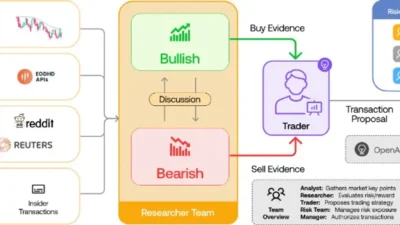

Thinking mode, web, and batches

OpenAI describes thinking capabilities in this product line: reasoning-style passes that can use web search, emit multiple images from one prompt, and review outputs before they are shown. That makes the mode a better fit for asset packs and serialised panels than for chat-speed turnaround.

Expect slower wall-clock time on heavy jobs than on plain text chat; plan async renders, not live keystroke feedback.
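
As a rough illustration of planning async renders, the sketch below queues several prompts and caps how many run at once. It is a minimal sketch, not OpenAI's API: `render_image` is a hypothetical stand-in that only sleeps to simulate latency, and `render_batch` and `max_concurrent` are names invented for this example. Swap in your real client call where indicated.

```python
import asyncio


async def render_image(prompt: str, delay_s: float = 0.01) -> dict:
    """Hypothetical stand-in for a slow render call; replace with your real client."""
    await asyncio.sleep(delay_s)  # simulates wall-clock render latency
    return {"prompt": prompt, "status": "done"}


async def render_batch(prompts: list, max_concurrent: int = 2) -> list:
    """Run renders concurrently, capped by a semaphore; results keep prompt order."""
    gate = asyncio.Semaphore(max_concurrent)

    async def render_one(prompt: str) -> dict:
        async with gate:  # at most max_concurrent renders in flight
            return await render_image(prompt)

    # gather() returns results in the order the awaitables were passed in
    return await asyncio.gather(*(render_one(p) for p in prompts))


if __name__ == "__main__":
    results = asyncio.run(render_batch(["poster A", "poster B", "menu C"]))
    for result in results:
        print(result["prompt"], result["status"])
```

The semaphore is there so a large asset pack does not fire every request at once; pick the cap from your own account limits rather than from this example.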

Languages, density, resolution

OpenAI highlights improved handling of non-Latin scripts (for example Japanese, Korean, Hindi, and Bengali in its launch copy) and up to 2K output for fine detail. Neither claim removes the need to proofread: a sharp glyph is not a fact-checked price.

If your prompt depends on post-training world events, web-augmented modes may help — but anything safety- or compliance-critical still needs a human source of truth. A knowledge cutoff in the underlying model still applies unless the product path you use explicitly grounds on fresh data.

Hard limits and buyer hygiene

- Stack transparency: At launch, OpenAI’s consumer-facing announcement did not spell out the technical class of the new image stack. Treat armchair diagrams of “how it works inside” as guesswork until the company publishes engineering detail.

- Throughput vs quality: Modes that search, plan, and self-check trade speed for consistency.

- Synthetic remains synthetic: Menus, contracts, and data graphics can read cleanly and still be wrong.
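
One way to make the last point operational is to compare what the rendered image actually says against your own source of truth. The sketch below assumes a person (or an OCR step you trust) has already transcribed the rendered text into a dictionary. The function name `find_mismatches` and the field names are hypothetical, chosen only for illustration.

```python
from typing import Dict, Optional, Tuple


def find_mismatches(
    expected: Dict[str, str], transcribed: Dict[str, str]
) -> Dict[str, Tuple[str, Optional[str]]]:
    """Return fields whose transcribed text is missing or differs from the source of truth."""
    mismatches = {}
    for field, want in expected.items():
        got = transcribed.get(field)
        # Leading/trailing whitespace is ignored; everything else must match exactly
        if got is None or got.strip() != want.strip():
            mismatches[field] = (want, got)
    return mismatches


if __name__ == "__main__":
    source_of_truth = {"price": "$12.50", "date": "3 May"}
    read_from_image = {"price": "$12.80", "date": "3 May"}
    print(find_mismatches(source_of_truth, read_from_image))
```

An empty result means the transcription matches the reference values. It does not mean the reference values themselves are right, so the human check stays in the loop.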

Access: ChatGPT, Codex, API

OpenAI ties Images 2.0 to ChatGPT and Codex experiences and advertises a gpt-image-2 API track with pricing that varies by quality and resolution. Plan gates and regional rollouts can change without a further announcement, so confirm in your account and in the vendor’s current API pricing documentation before you promise a client anything.

Why diffusion-era generators mangled spelling

Classic diffusion models denoise the whole canvas; letterforms are a thin slice of pixels, so the objective can “smooth” text into plausible nonsense that looks like words. Newer systems may blend training objectives and architectures; until OpenAI publishes a technical card for this stack, treat internal guesses as speculation.
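
The “thin slice of pixels” argument can be made concrete with toy arithmetic. The sketch below assumes a simple per-pixel squared-error total over the canvas, which is an illustrative simplification and not a description of OpenAI’s stack or any specific model’s objective. The function name and all numbers are invented for the example.

```python
def text_loss_share(
    canvas_px: int, text_px: int, text_err: float, background_err: float
) -> float:
    """Fraction of a summed per-pixel error that comes from text pixels."""
    if not 0 <= text_px <= canvas_px:
        raise ValueError("text_px must be between 0 and canvas_px")
    text_total = text_px * text_err
    background_total = (canvas_px - text_px) * background_err
    total = text_total + background_total
    return text_total / total if total else 0.0


if __name__ == "__main__":
    canvas = 1024 * 1024
    text = canvas // 50  # assume lettering covers about 2% of the canvas
    # Same average error everywhere: text gets a share roughly equal to its area
    print(round(text_loss_share(canvas, text, 1.0, 1.0), 4))
    # Text pixels twice as wrong on average, still a small share of the total
    print(round(text_loss_share(canvas, text, 2.0, 1.0), 4))
```

Under these assumptions, even badly garbled lettering moves the total only slightly, which is the intuition behind text that looks plausible but does not spell anything.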

Frequently asked questions

Is Images 2.0 just rebranded DALL·E 3?

OpenAI markets it as a new Images tier with different fidelity and workflow goals. Treat API and product names on today’s dashboard as authoritative, not forum shorthand.

Will free accounts get the same “thinking” depth as paid?

OpenAI routinely tiers depth, rate, and resolution by plan. Read the in-app model picker and account limits; do not infer entitlements from a day-one write-up.

Where do I get API prices for gpt-image-2?

From the vendor’s current API pricing documentation only. This article avoids dollar figures because tiers and promos change.

Ship pixels to production only after human proof of prices, dates, legal text, and brand voice — legibility is not accuracy.