Short prompt in, bland paragraph out. Most people still talk to Claude like it is a search box. These ten habits cost almost no extra time, and they change tone, depth, and usefulness more than swapping tiers or chasing the newest model.

I use Claude across writing, code, rough research, and debugging. The pattern I keep seeing elsewhere: one-line asks, fuzzy success criteria, then disappointment when the reply reads like generic brochure copy.

The gap is rarely “wrong tier.” It is how you talk to the model: scope, structure, and iteration. Below is what actually moved the needle for me — some of it straight from Anthropic’s prompt-engineering docs, some from watching better operators than me, some from boring repetition.

If you are still picking an assistant, start with the Claude vs ChatGPT in 2026 comparison. If the vocabulary is new, the explainer on what generative AI is remains the shortest on-ramp.

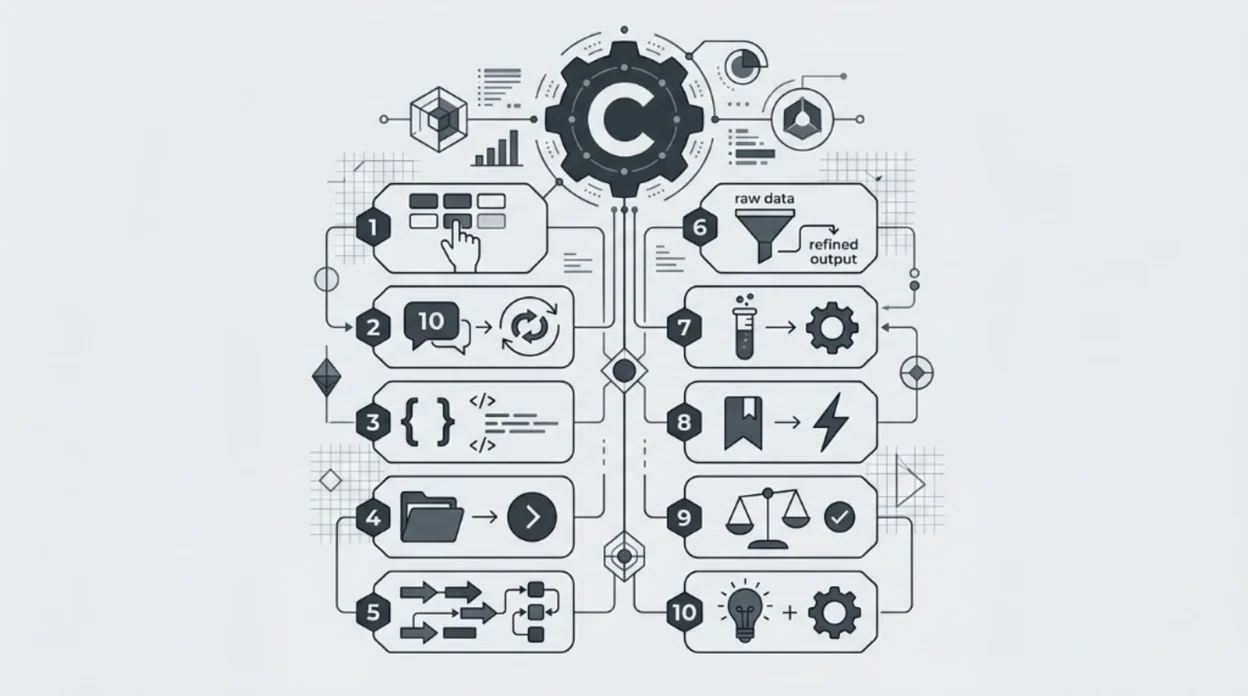

1. Role before task

Five seconds of context beats a page of adjectives after the fact. Instead of opening with “Write an email to my boss about the delay,” anchor who the model should be:

Pattern: You are a senior project manager with fifteen years in software delivery. You are direct, diplomatic, and allergic to blame-shifting. Draft an email to my manager explaining a two-week slip on [project]. Facts: [bullets]. Tone: calm ownership, one clear ask.

Same underlying task, different default vocabulary, risk framing, and level of detail. Try the same question once with no role and once with a tight role — the split is obvious side by side.
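If you call Claude through the API rather than the chat window, the role line fits naturally in the Messages API's `system` parameter. Anthropic's prompting docs recommend that parameter for role prompting. This minimal sketch only builds the request arguments, so it runs without an API key. `build_role_request` is a hypothetical helper name, and `MODEL_ID_PLACEHOLDER` stands in for whichever current model ID you use.

```python
def build_role_request(role: str, task: str, model: str = "MODEL_ID_PLACEHOLDER",
                       max_tokens: int = 1024) -> dict:
    """Return keyword arguments for an Anthropic Messages API call.

    The role goes in `system`; the concrete task goes in the single user turn.
    `model` is a placeholder (assumption): substitute a real, current model ID.
    """
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": role,
        "messages": [{"role": "user", "content": task}],
    }


request = build_role_request(
    role=("You are a senior project manager with fifteen years in software delivery. "
          "You are direct, diplomatic, and allergic to blame-shifting."),
    task=("Draft an email to my manager explaining a two-week slip on [project]. "
          "Facts: [bullets]. Tone: calm ownership, one clear ask."),
)
# With the official `anthropic` SDK installed and ANTHROPIC_API_KEY set,
# you would send it roughly like this:
#   import anthropic
#   reply = anthropic.Anthropic().messages.create(**request)
```

To run the side-by-side comparison, send the same `task` once with the `system` entry removed and once with it left in.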

2. “Think step by step” with real steps

Tacking “think step by step” onto the end of a vague, blob-shaped prompt is cargo-cult prompting. The useful version names the stages you want, so the trace stays readable when something looks off.

Pattern: Analyse this dataset in order: (1) list missing values by column, (2) flag outliers with IQR on numeric columns only, (3) one-paragraph distribution summary per numeric column, (4) suggest two candidate feature pairs worth correlation checks and say why. Stop after each stage with a short header so I can skim.

Explicit stages make the output easier to audit. You can fix step three without throwing away step one.
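If you reuse staged prompts, keeping the stages as a list makes "fix step three" a one-item edit while the other stages stay identical between runs. Here is a small sketch; `staged_prompt` is a hypothetical helper, and the closing sentence simply reuses the wording from the pattern above.

```python
def staged_prompt(task: str, stages: list[str]) -> str:
    """Turn an ordered list of stages into one numbered, auditable prompt."""
    if not stages:
        raise ValueError("name at least one stage")
    # Number from 1 so the labels match how you will refer to the stages later.
    numbered = ", ".join(f"({i}) {stage}" for i, stage in enumerate(stages, start=1))
    return (f"{task} in order: {numbered}. "
            "Stop after each stage with a short header so I can skim.")


prompt = staged_prompt("Analyse this dataset", [
    "list missing values by column",
    "flag outliers with IQR on numeric columns only",
    "one-paragraph distribution summary per numeric column",
    "suggest two candidate feature pairs worth correlation checks and say why",
])
```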

3. Few-shot: show the style you want

For anything where voice matters — product blurbs, internal mail, release notes — paste two or three examples you like, then ask for more in that shape. Rhythm, density, and punctuation habits transfer faster than a bullet list of “sound professional.”

Pattern: Here are three on-brand product descriptions I like: [paste]. Write five more for: [SKU list]. Match tone, sentence length, and feature-to-benefit ratio. If you must invent a spec, mark it [verify].
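Anthropic's prompting docs suggest wrapping examples in `<example>` tags, nested inside `<examples>` when there are several, so the model can tell samples apart from instructions. This is a rough sketch of the pattern above built that way. `few_shot_prompt` is a hypothetical helper, and the bracketed strings are stand-ins for your real examples and SKUs.

```python
def few_shot_prompt(examples: list[str], targets: list[str]) -> str:
    """Wrap liked examples in tags, then ask for more in the same shape."""
    shots = "\n".join(f"<example>\n{text.strip()}\n</example>" for text in examples)
    items = "\n".join(f"- {t}" for t in targets)
    return (
        f"Here are {len(examples)} on-brand product descriptions I like:\n"
        f"<examples>\n{shots}\n</examples>\n\n"
        f"Write {len(targets)} more, one per item below. Match tone, sentence length, "
        f"and feature-to-benefit ratio. If you must invent a spec, mark it [verify].\n"
        f"{items}"
    )


prompt = few_shot_prompt(
    ["[paste example 1]", "[paste example 2]", "[paste example 3]"],
    ["[SKU 1]", "[SKU 2]", "[SKU 3]", "[SKU 4]", "[SKU 5]"],
)
```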

4. XML-shaped prompts for long asks

When a prompt runs past one dense paragraph, sections bleed into each other. Anthropic’s own materials treat clear structure — including XML-style tags — as a first-class tool. You do not need a schema; you need separation.

<context>…who we are, who the reader is…</context>
<task>…one concrete deliverable…</task>
<constraints>…length, taboos, must-include facts…</constraints>
<tone>…advisor vs sales, warm vs cold…</tone>

Claude tends to respect those boundaries better than a single wall of prose. Your edits also get easier: tweak <constraints> and rerun.
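If you assemble tagged prompts in a script, a dict of sections does the job, and "tweak the constraints and rerun" becomes a one-key change. A minimal sketch, assuming plain section names as tags; `tagged_prompt` is a hypothetical helper, and no XML validation is attempted because the tags only need to act as consistent section markers.

```python
def tagged_prompt(sections: dict[str, str]) -> str:
    """Render each section inside a matching <name>...</name> pair.

    Dicts keep insertion order (Python 3.7+), so sections appear as given.
    """
    return "\n\n".join(
        f"<{name}>\n{body.strip()}\n</{name}>" for name, body in sections.items()
    )


sections = {
    "context": "[who we are, who the reader is]",
    "task": "[one concrete deliverable]",
    "constraints": "[length, taboos, must-include facts]",
    "tone": "[advisor vs sales, warm vs cold]",
}
prompt = tagged_prompt(sections)

# Tighten only the constraints and rebuild; every other section is unchanged.
sections["constraints"] = "Under 150 words; no pricing claims."
prompt = tagged_prompt(sections)
```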

5. Ask Claude to punch holes in its own draft

First pass answers the question. Second pass finds the weak joints: skipped assumptions, thin evidence, the objection a sceptic would raise.

Pattern: Critique your previous answer. List: (a) unstated assumptions, (b) weakest claim and why, (c) what a hostile reader would say. Then rewrite the original answer incorporating only fixes that survive your own critique.

For strategy and analysis, that second pass is often where nuance shows up — not from a longer first draft, but from forced disagreement.

6. Treat the thread as a workspace

The best results I get are rarely message one. Round one is directionally right; rounds two through five tighten scope, fix tone drift, and localise examples.

- Round 1: rough draft.

- Round 2: “Section two is generic — rewrite for [industry] with one named constraint each paragraph.”

- Round 3: “Cut the intro by half; lead with the number.”

- Round 4: “Paragraph four shifted voice; match paragraph one.”

Thirty seconds of typing per round beats one heroic mega-prompt that still misses the mark.
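The chat app keeps the thread for you. The Messages API does not: it is stateless, so every round resends the full history, and each follow-up is read against the earlier drafts. This sketch shows that accumulation. `Thread` is a hypothetical class, and the assistant replies are placeholder strings standing in for real model output.

```python
from dataclasses import dataclass, field


@dataclass
class Thread:
    """Keep every turn so each follow-up is read against the full draft history."""
    messages: list[dict] = field(default_factory=list)

    def ask(self, text: str) -> None:
        self.messages.append({"role": "user", "content": text})

    def record_reply(self, text: str) -> None:
        self.messages.append({"role": "assistant", "content": text})


thread = Thread()
thread.ask("Rough draft: a post on [topic] for [audience].")
thread.record_reply("<draft v1 from Claude>")  # placeholder reply
thread.ask("Section two is generic; rewrite for [industry] with one named constraint each paragraph.")
thread.record_reply("<draft v2 from Claude>")  # placeholder reply
thread.ask("Cut the intro by half; lead with the number.")
# thread.messages is what you would pass as `messages` on the next API call.
```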

7. Constraints beat vague “write a post”

Open-ended asks force a thousand silent defaults. Tight constraints kill the mushy middle: length, audience, taboo words, format, and point of view.

Pattern: ~600 words for senior developers who are sceptical of AI coding tools. Conversational but technically credible. Include one short code example. Do not use the words “revolutionary,” “game-changing,” or “unlock.” End on limitations, not a pep talk.

Reusable knobs: word cap, reader skill level, forbidden vocabulary, paragraph count, “write as if you mildly disagree with the premise.”
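Constraints like these are also checkable, so you can confirm the reply honoured them before you paste it anywhere. Here is a rough local check. `check_constraints` is a hypothetical helper, and the word count only splits on whitespace, so treat it as approximate. The banned-word test matches whole words case-insensitively, which means "unlocked" slips through; widen the pattern if you also want to catch inflections.

```python
import re


def check_constraints(draft: str, max_words: int, banned: list[str]) -> list[str]:
    """Return a list of violations; an empty list means the draft passes."""
    problems = []
    word_count = len(draft.split())  # rough count: whitespace-separated tokens
    if word_count > max_words:
        problems.append(f"too long: {word_count} words (cap {max_words})")
    for term in banned:
        # Case-insensitive whole-word match: "Unlock" is caught, "unlocked" is not.
        if re.search(rf"\b{re.escape(term)}\b", draft, flags=re.IGNORECASE):
            problems.append(f"banned word: {term!r}")
    return problems


BANNED = ["revolutionary", "game-changing", "unlock"]
violations = check_constraints("This game-changing tool will unlock your team.", 650, BANNED)
```

The cap is set a little above 600 because the pattern asks for roughly 600 words, not exactly 600.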

8. Rubber-duck debugging (with answers)

Pasting code and saying “fix” sometimes works. More often you want a trace, not a patch you do not understand.

Pattern: I want behaviour Z. Here is the code. Current output is Y. Hypothesis: async ordering around [area]. Walk execution in order, call out where reality diverges from my mental model, then suggest the smallest change that fixes it.

The same structure helps with non-code problems: explain the situation, name expected versus actual, and ask for a stepwise walk-through before any solutions.
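If you file these requests often, a small builder keeps the order fixed (expected, actual, optional hypothesis, code, then the walk-through request), so you never skip the trace and jump straight to "fix". A sketch only: `debug_prompt` is a hypothetical helper, and the instruction text is lifted from the pattern above.

```python
def debug_prompt(expected: str, actual: str, code: str, hypothesis: str = "") -> str:
    """Assemble an expected-vs-actual trace request before asking for any fix."""
    lines = [
        f"I want: {expected}",
        f"Current output: {actual}",
    ]
    if hypothesis:  # optional: omit it rather than invent one
        lines.append(f"My hypothesis: {hypothesis}")
    lines += [
        "Code:",
        code.strip(),
        "Walk execution in order, call out where reality diverges from my mental "
        "model, then suggest the smallest change that fixes it.",
    ]
    return "\n".join(lines)


prompt = debug_prompt(
    expected="[behaviour Z]",
    actual="[output Y]",
    code="[paste code]",
    hypothesis="async ordering around [area]",
)
```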

9. A small library of reusable templates

If you rewrite the same skeleton weekly, you are leaking time. Keep a plain list: status mail, code-review checklist, meeting recap, content brief, interview prep. Swap bracketed fields at send time.

Pattern: You are my internal-comms assistant. Weekly team update: wins [insert], blockers [insert], next-week focus [insert]. Upbeat, honest, under 200 words. Do not soften blockers; be direct and constructive.

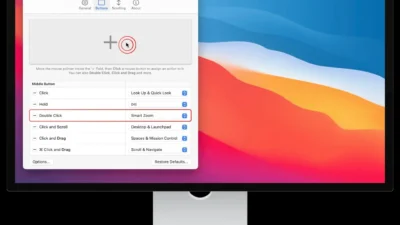

Where Anthropic exposes Projects (or other saved system context), park the stable part of those templates there so you stop re-pasting the boilerplate.
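Outside Projects, Python's standard `string.Template` covers a plain template library. This sketch swaps the article's `[insert]` brackets for `$name` fields. The template name and field names are illustrative. `substitute()` raises `KeyError` when a field is missing, so a half-filled template fails loudly instead of going out with a literal placeholder in it.

```python
from string import Template

# A tiny template library; names and wording are illustrative.
TEMPLATES = {
    "weekly_update": Template(
        "You are my internal-comms assistant. Weekly team update: wins $wins, "
        "blockers $blockers, next-week focus $focus. Upbeat, honest, under 200 words. "
        "Do not soften blockers; be direct and constructive."
    ),
}


def render(name: str, **fields: str) -> str:
    # substitute() raises KeyError for any missing field.
    return TEMPLATES[name].substitute(**fields)


message = render("weekly_update", wins="shipped v2.3", blockers="flaky CI", focus="load tests")
```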

10. Say what you do not want

Models have recurring tics: hedging openers, summary paragraphs nobody asked for, “it is important to note” padding. Negative instructions delete specific failure modes faster than vague “be concise.”

- “No disclaimers unless a claim is legally sensitive.”

- “Skip the intro; start at heading one.”

- “No closing summary paragraph.”

- “Avoid ‘it is worth noting,’ ‘in conclusion,’ and ‘leverage.’”

Where the product offers saved preferences or memory-style settings, put your standing “do not” list there once instead of repeating it every session. Check Anthropic’s current help docs for what your plan actually supports.
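If you call the API from your own scripts, one way to get the same effect is to keep the standing list in a single place in code and append it to every system prompt. A minimal sketch: `STANDING_DONTS` and `with_standing_rules` are hypothetical names, and the rules are the ones listed above.

```python
STANDING_DONTS = (
    "No disclaimers unless a claim is legally sensitive.",
    "Skip the intro; start at heading one.",
    "No closing summary paragraph.",
    "Avoid 'it is worth noting', 'in conclusion', and 'leverage'.",
)


def with_standing_rules(system: str, rules: tuple[str, ...] = STANDING_DONTS) -> str:
    """Append the standing do-not list to a task-specific system prompt."""
    rule_lines = "\n".join(f"- {rule}" for rule in rules)
    return f"{system.rstrip()}\n\nStanding rules:\n{rule_lines}"


system = with_standing_rules("You are my editor for internal engineering posts.")
```

A tuple rather than a list keeps the shared default from being mutated by accident.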

Frequently asked questions

Do I need a paid plan for this to work?

No. These habits improve output on any tier that can run the model. Paid tiers mainly change limits (context length, rate, attachments). Check Anthropic’s current plan table for what you actually have before you design a workflow around huge files or nonstop back-to-back turns.

Is “think step by step” still worth saying?

Only if you pair it with named steps you can inspect. A slogan at the end of a vague prompt does not add structure; an ordered checklist does.

Do XML tags have to be valid XML?

No. You are using tags as section headers that both you and the model can see. Consistent names matter more than a schema. Anthropic documents this pattern under prompting best practices.

Will this fix hallucinations?

It reduces sloppy outputs and makes mistakes easier to spot. It does not turn the model into a fact oracle. For anything high-stakes, keep verification habits: primary sources, dates, and your own domain checks.

Pick two habits you do not use yet. Run them on a real task this week. Momentum beats collecting more “cheat sheets.”